Customizable artificial intelligence and gesture recognition

In many respects, we think of artificial intelligence as all-encompassing: one AI will do any task we ask of it. In reality, even when AI reaches the advanced levels we envision, it won’t automatically be able to do everything. It still has to learn the specific tasks we need. The Fraunhofer Institute for Microelectronic Circuits and Systems (IMS) has been giving this a lot of thought.

AI gesture training

Okay, so you’ve got an AI. Now you need it to learn the tasks you want it to perform. That in itself is a common exercise today. The challenge Fraunhofer IMS set itself, however, was to train an AI without any additional computers, directly on the device that runs it.
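To make “training without any additional computers” concrete, here is a minimal Python sketch of a learner that updates its own weights in place, one example at a time. It is not Fraunhofer IMS’s method: it swaps in a plain single-layer softmax classifier trained with stochastic gradient descent, and the class name `TinySoftmaxClassifier`, the learning rate and the toy feature vectors are all hypothetical. The point is only that the weights are allocated once and each training step needs nothing beyond the device’s own arithmetic.

```python
import math
import random


class TinySoftmaxClassifier:
    """Hypothetical on-device learner: single-layer softmax trained with SGD.

    All weights are allocated once up front; training updates them in place,
    so no external computer is needed.
    """

    def __init__(self, n_features, n_classes, lr=0.1, seed=0):
        rng = random.Random(seed)
        self.n_features = n_features
        self.n_classes = n_classes
        self.lr = lr
        # One weight row per class, plus one bias per class.
        self.w = [[rng.uniform(-0.01, 0.01) for _ in range(n_features)]
                  for _ in range(n_classes)]
        self.b = [0.0] * n_classes

    def probabilities(self, x):
        logits = [sum(wi * xi for wi, xi in zip(row, x)) + bias
                  for row, bias in zip(self.w, self.b)]
        m = max(logits)  # subtract the max for numerical stability
        exps = [math.exp(z - m) for z in logits]
        total = sum(exps)
        return [e / total for e in exps]

    def predict(self, x):
        p = self.probabilities(x)
        return p.index(max(p))

    def train_step(self, x, label):
        # Cross-entropy gradient w.r.t. the logits is (p - one_hot(label)).
        p = self.probabilities(x)
        for k in range(self.n_classes):
            g = p[k] - (1.0 if k == label else 0.0)
            row = self.w[k]
            for i in range(self.n_features):
                row[i] -= self.lr * g * x[i]
            self.b[k] -= self.lr * g


# Usage: three made-up "gestures", each already reduced to 4 features.
examples = [([1.0, 0.0, 0.0, 0.2], 0),
            ([0.0, 1.0, 0.0, 0.2], 1),
            ([0.0, 0.0, 1.0, 0.2], 2)]
clf = TinySoftmaxClassifier(n_features=4, n_classes=3, lr=0.5)
for _ in range(200):
    for x, y in examples:
        clf.train_step(x, y)
print([clf.predict(x) for x, _ in examples])  # → [0, 1, 2]
```

A real gesture recognizer would likely need a hidden layer and far more examples per class; the single layer simply keeps the update rule short enough to read.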

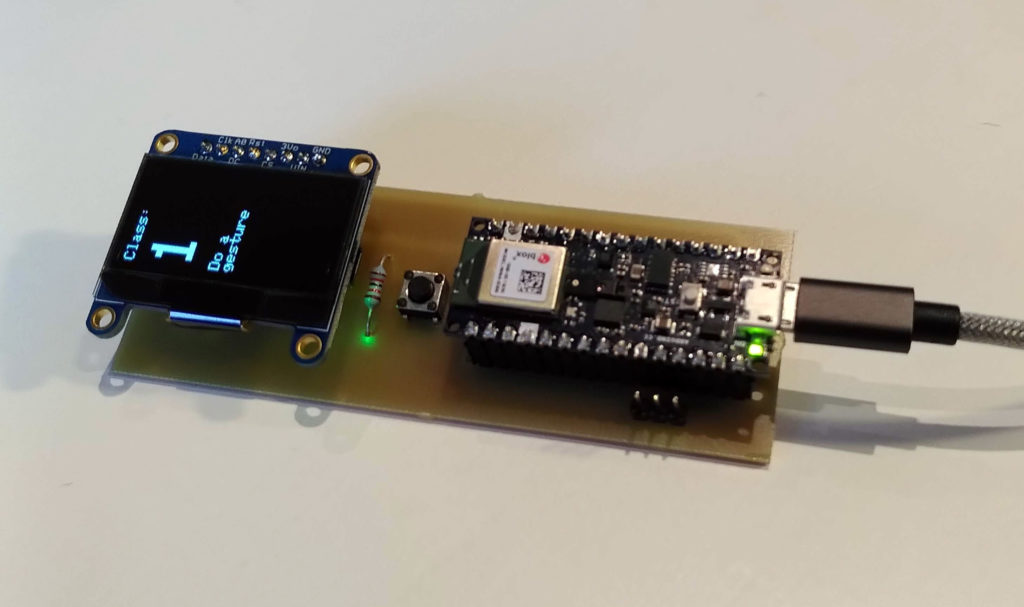

As a test case, the team used an Arduino Nano 33 BLE Sense to build a demonstration device: an untethered gesture recognition controller that relies only on the board’s onboard 9-axis motion sensor. After pressing a button, the user draws a number in the air, and the corresponding commands are sent wirelessly to peripherals, in this case a robotic arm.
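The interaction loop can be sketched as a small event-driven controller. This Python sketch assumes hold-to-record semantics (samples are buffered while the button is held and classified on release), a hypothetical `COMMANDS` table mapping digits to robot-arm commands, and injected `classify` and `send` callbacks standing in for the neural network and the wireless link; none of these names come from the demo itself.

```python
from collections import deque

# Hypothetical digit-to-command table; the demo's real mapping is not published here.
COMMANDS = {0: "HOME", 1: "ROTATE_LEFT", 2: "ROTATE_RIGHT",
            3: "GRIP_OPEN", 4: "GRIP_CLOSE"}


class GestureController:
    """Buffers IMU samples while the button is held, then classifies them."""

    def __init__(self, classify, send, max_samples=200):
        self.classify = classify  # callable: list of samples -> digit or None
        self.send = send          # callable: command string -> None (wireless link)
        # Bounded buffer: memory use is fixed no matter how long the gesture is.
        self.buffer = deque(maxlen=max_samples)
        self.recording = False

    def on_button(self, pressed):
        if pressed:
            self.buffer.clear()
            self.recording = True
        elif self.recording:
            self.recording = False
            digit = self.classify(list(self.buffer))
            command = COMMANDS.get(digit)
            if command is not None:
                self.send(command)

    def on_imu_sample(self, sample):
        # sample: 9 values (3-axis accelerometer, gyroscope, magnetometer).
        if self.recording:
            self.buffer.append(sample)


# Usage with a stand-in classifier that always recognizes the digit 2.
sent = []
controller = GestureController(classify=lambda samples: 2 if samples else None,
                               send=sent.append, max_samples=50)
controller.on_imu_sample([0.0] * 9)  # ignored: button not pressed yet
controller.on_button(True)
for t in range(80):
    controller.on_imu_sample([float(t)] * 9)
controller.on_button(False)
print(sent)  # → ['ROTATE_RIGHT']
```

Because the buffer is a `deque` with `maxlen`, an overly long gesture keeps only its most recent samples; stopping the recording when the buffer fills would be an equally reasonable choice.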

Embedded intelligence

At first glance this might not seem especially advanced. But consider that everything runs entirely on the device: an Arduino Nano with only a small amount of memory. Fraunhofer IMS calls this “embedded intelligence,” because it’s not the robot arm that’s clever, but the controller itself.
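To give a sense of scale, a quick calculation shows how little memory a small fully connected network needs. The layer sizes below (36 input features, 16 hidden neurons, 10 output digits) are a hypothetical example, not the architecture Fraunhofer IMS used. The comparison point is the 256 KB of SRAM on the Nano 33 BLE Sense’s nRF52840 microcontroller.

```python
def dense_param_count(layer_sizes):
    """Weights plus biases for a fully connected network with these layer widths."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


layers = [36, 16, 10]           # hypothetical: features -> hidden -> digits
params = dense_param_count(layers)
bytes_float32 = params * 4      # 4 bytes per 32-bit float
fraction = bytes_float32 / (256 * 1024)  # share of 256 KB SRAM

print(params, bytes_float32)    # → 762 3048
print(f"{fraction:.1%}")        # → 1.2%
```

Training needs more than this, since gradients and intermediate activations must also be stored, but even several times the parameter memory remains a small slice of the board’s RAM.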

This is achieved by training the device with a “feature extraction” algorithm. When a gesture is performed, only the relevant information is picked out of the sensor data for the artificial neural network (ANN) to work with. This allows for considerable data reduction and a very efficient, compact AI.
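The data reduction that feature extraction buys can be illustrated with a common, simple feature set: per-axis summary statistics. This Python sketch is not Fraunhofer IMS’s algorithm. It assumes a recorded window of 100 samples from the 9-axis sensor and reduces each axis to its mean, standard deviation, minimum and maximum. The synthetic sine-wave window is made up purely for illustration.

```python
import math


def extract_features(window):
    """Reduce a window of 9-axis samples to per-axis summary statistics.

    window: list of samples, each a sequence of floats (one per axis).
    Returns 4 features (mean, std, min, max) per axis.
    """
    n_axes = len(window[0])
    features = []
    for axis in range(n_axes):
        values = [sample[axis] for sample in window]
        mean = sum(values) / len(values)
        var = sum((v - mean) ** 2 for v in values) / len(values)
        features.extend([mean, math.sqrt(var), min(values), max(values)])
    return features


# Synthetic window: 100 samples x 9 axes (made-up values, not real sensor data).
window = [[math.sin(0.1 * t + axis) for axis in range(9)] for t in range(100)]
features = extract_features(window)
raw_values = len(window) * len(window[0])
print(raw_values, len(features), raw_values / len(features))  # → 900 36 25.0
```

Here 900 raw readings shrink to 36 numbers, a 25x reduction, so the network that follows needs far fewer inputs and therefore far fewer weights.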

Obviously, this is just one example use case, but it’s easy to see the massive potential of this kind of compact, trainable AI, whether in edge control, industrial applications, wearables or maker projects. If you can train a device to do the job you want, it can offer impressive embedded intelligence with very few resources.