Fruit identification using Arduino and TensorFlow

By Dominic Pajak and Sandeep Mistry

Arduino is on a mission to make machine learning easy enough for anyone to use. The other week we announced the availability of TensorFlow Lite Micro in the Arduino Library Manager. With this came some cool ready-made ML examples such as speech recognition, simple machine vision and even an end-to-end gesture recognition training tutorial. For a comprehensive background we recommend you take a look at that article.

In this article we are going to walk through an even simpler end-to-end tutorial using the TensorFlow Lite Micro library and the Arduino Nano 33 BLE Sense’s colorimeter and proximity sensor to classify objects. To do this, we will be running a small neural network on the board itself.

The philosophy of TinyML is doing more on the device with fewer resources – in smaller form factors, with less energy and lower-cost silicon. Running inference on the same board as the sensors has benefits in terms of privacy and battery life, and means it can be done independently of a network connection.

The fact that we have the proximity sensor on the board means we get an instant depth reading of an object in front of the board – instead of using a camera and having to determine if an object is of interest through machine vision.

In this tutorial, when the object is close enough we sample the color – the onboard RGB sensor can be viewed as a 1-pixel color camera. While this method has limitations, it gives us a quick way of classifying objects using only a small amount of resources. Note that you could indeed run a complete CNN-based vision model on-device. As this particular Arduino board includes an onboard colorimeter, we thought it’d be fun and instructive to demonstrate in this way to start with.

We’ll show that a simple but complete end-to-end TinyML application can be achieved quickly and without a deep background in ML or embedded development. What we cover here is data capture, training, and classifier deployment. This is intended to be a demo, but there is scope to improve and build on this should you decide to connect an external camera down the road. We want you to get an idea of what is possible and a starting point with the tools available.

What you’ll need

- Arduino Nano 33 BLE Sense

- A micro USB cable

- A desktop/laptop machine with a web browser

- Some objects of different colors

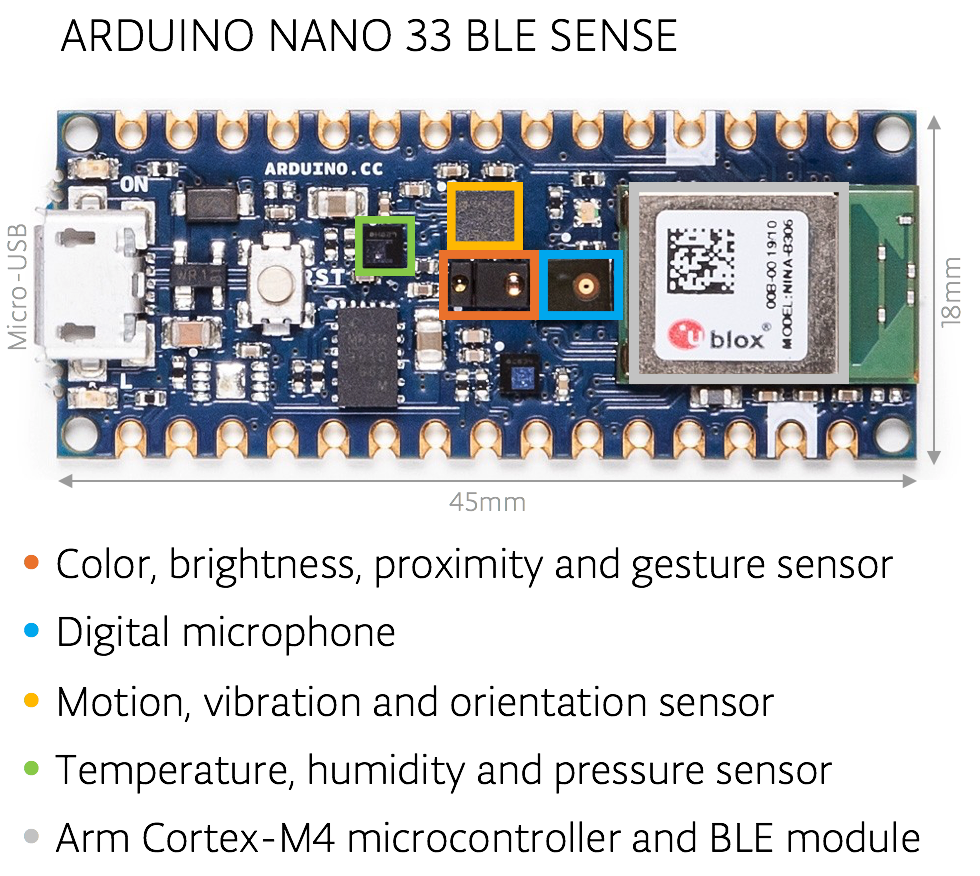

About the Arduino board

The Arduino Nano 33 BLE Sense board we’re using here has an Arm Cortex-M4 microcontroller running Mbed OS and a ton of onboard sensors – digital microphone, accelerometer, gyroscope, temperature, humidity, pressure, light, color and proximity.

While tiny by cloud or mobile standards the microcontroller is powerful enough to run TensorFlow Lite Micro models and classify sensor data from the onboard sensors.

Setting up the Arduino Create Web Editor

In this tutorial we’ll be using the Arduino Create Web Editor – a cloud-based tool for programming Arduino boards. To use it you have to sign up for a free account and install a plugin that allows the browser to communicate with your Arduino board over a USB cable.

You can get set up quickly by following the getting started instructions which will guide you through the following:

- Download and install the plugin

- Sign in or sign up for a free account

(NOTE: If you prefer, you can also use the Arduino IDE desktop application; the setup for it is described in the previous tutorial.)

Capturing training data

We will now capture data to train our model in TensorFlow. First, choose a few different colored objects. We’ll use fruit, but you can use whatever you prefer.

Setting up the Arduino for data capture

Next we’ll use Arduino Create to program the Arduino board with an application object_color_capture.ino that samples color data from objects you place near it. The board sends the color data as a CSV log to your desktop machine over the USB cable.
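As a rough illustration of what a capture loop like this does, here is a minimal Python sketch. The real application is an Arduino sketch, which is not reproduced here. The threshold values, the function names `should_sample`, `to_csv_row` and `capture`, the assumption that smaller proximity readings mean a nearer object, and the choice to log each channel as a fraction of the total are all assumptions made for this example rather than details taken from object_color_capture.ino.

```python
HEADER = "Red,Green,Blue"


def should_sample(proximity, brightness, max_proximity=0, min_brightness=10):
    """Return True when an object is close and bright enough to sample.

    Assumes smaller proximity readings mean a nearer object; both
    thresholds are illustrative placeholders, not values from the sketch.
    """
    return proximity <= max_proximity and brightness > min_brightness


def to_csv_row(red, green, blue):
    """Format one reading as a CSV row of channel ratios that sum to 1."""
    total = red + green + blue
    if total == 0:
        raise ValueError("cannot normalize an all-zero reading")
    return ",".join(f"{channel / total:.3f}" for channel in (red, green, blue))


def capture(readings):
    """Turn (proximity, brightness, r, g, b) tuples into CSV log lines."""
    lines = [HEADER]
    for proximity, brightness, red, green, blue in readings:
        if should_sample(proximity, brightness) and red + green + blue > 0:
            lines.append(to_csv_row(red, green, blue))
    return lines


if __name__ == "__main__":
    simulated = [
        (200, 50, 10, 10, 10),  # object too far away: skipped
        (0, 5, 3, 2, 1),        # too dark: skipped
        (0, 80, 60, 30, 10),    # close and well lit: logged
    ]
    print("\n".join(capture(simulated)))
```

Logging ratios rather than raw counts is one way to make the samples less sensitive to overall brightness, which may help when the lighting changes between captures.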

To load the object_color_capture.ino application onto your Arduino board:

- Connect your board to your laptop or PC with a USB cable

- The Arduino board takes a male micro USB

- Open object_color_capture.ino in Arduino Create by clicking this link

Your browser will open the Arduino Create web application (see GIF above).

- Press OPEN IN WEB EDITOR

- For existing users this button will be labeled ADD TO MY SKETCHBOOK

- Press Upload & Save

- This will take a minute

- You will see the yellow light on the board flash as it is programmed

- Open the serial Monitor

- This opens the Monitor panel on the left-hand side of the web application

- You will now see color data in CSV format here when objects are near the top of the board

Capturing data in CSV files for each object

For each object we want to classify we will capture some color data. By doing a quick capture with only one example per class we will not train a generalized model, but we can still get a quick proof of concept working with the objects you have to hand!

Say, for example, we are sampling an apple:

- Reset the board using the small white button on top.

- Keep your finger away from the sensor, unless you want to sample it!

- The Monitor in Arduino Create will say ‘Serial Port Unavailable’ for a minute

- You should then see Red,Green,Blue appear at the top of the serial monitor

- Put the front of the board to the apple.

- The board will only sample when it detects an object is close to the sensor and is sufficiently illuminated (turn the lights on or be near a window)

- Move the board around the surface of the object to capture color variations

- You will see the RGB color values appear in the serial monitor as comma separated data.

- Capture a few seconds of samples from the object

- Copy and paste this log data from the Monitor to a text editor

- Tip: untick AUTOSCROLL check box at the bottom to stop the text moving

- Save your file as apple.csv

- Reset the board using the small white button on top.

Do this a few more times, capturing other objects (e.g. banana.csv, orange.csv).

NOTE: The first line of each of the .csv files should read:

Red,Green,Blue

If you don’t see it at the top, you can just copy and paste in the line above.
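If you would rather check the header programmatically before uploading, a small helper along these lines could do it. This is only a sketch using the Python standard library; `ensure_header` and `parse_samples` are hypothetical names introduced for this example and are not part of the tutorial's notebook.

```python
import csv
import io

HEADER = ["Red", "Green", "Blue"]


def ensure_header(text):
    """Return CSV text that starts with the Red,Green,Blue header line."""
    # Drop blank lines and stray whitespace left over from copy and paste.
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    if not lines or lines[0].split(",") != HEADER:
        lines.insert(0, ",".join(HEADER))
    return "\n".join(lines) + "\n"


def parse_samples(text):
    """Parse header-checked CSV text into a list of (r, g, b) float tuples."""
    reader = csv.DictReader(io.StringIO(ensure_header(text)))
    return [tuple(float(row[name]) for name in HEADER) for row in reader]


if __name__ == "__main__":
    pasted = "0.600,0.300,0.100\n0.580,0.310,0.110\n"
    print(ensure_header(pasted), end="")
    print(parse_samples(pasted))
```

To apply it to a saved file such as apple.csv, read the file's text, pass it through `ensure_header`, and write the result back.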

Training the model

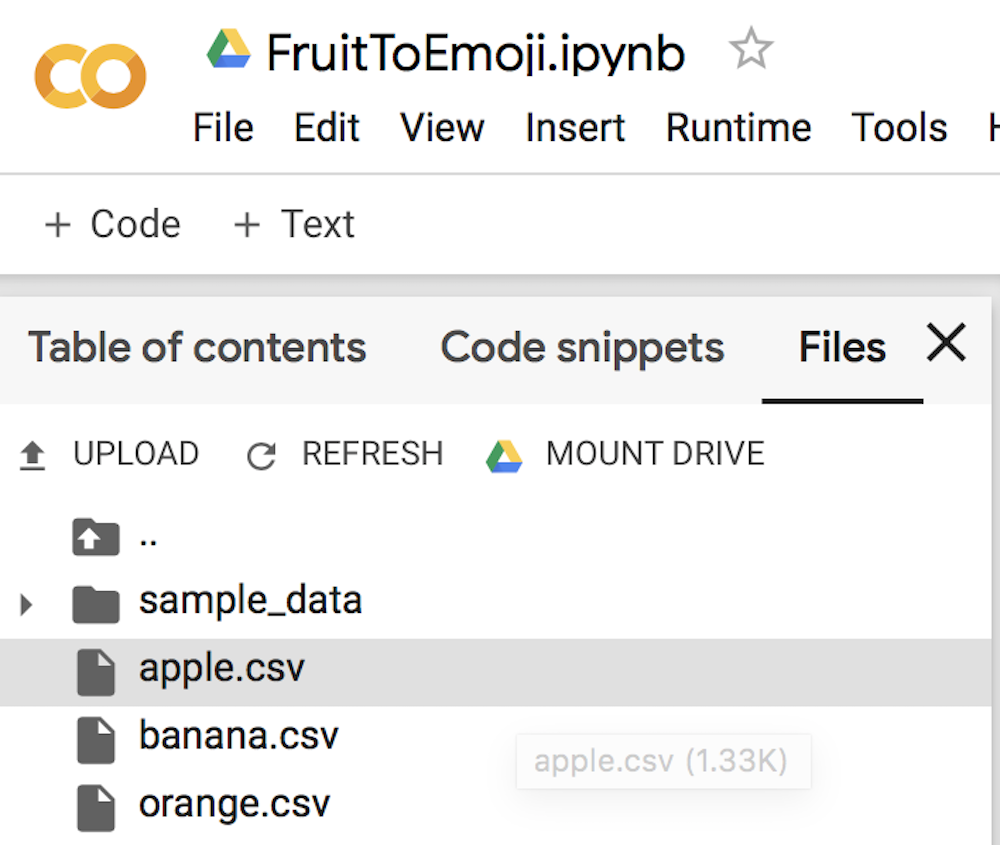

We will now use Colab to train an ML model using the data you captured in the previous section.

- First open the FruitToEmoji Jupyter Notebook in Colab

- Follow the instructions in the Colab notebook

- You will be uploading your *.csv files

- Parsing and preparing the data

- Training a model using Keras

- Outputting a TensorFlow Lite Micro model

- Downloading this to run the classifier on the Arduino

With that done you will have downloaded model.h to run on your Arduino board to classify objects!
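To give a feel for the "parsing and preparing the data" step, here is a minimal standard-library sketch of one common way to prepare such data for a classifier: pair each color sample with a one-hot label for its class, then shuffle and split into training and test sets. The notebook may do this differently (for example with NumPy or pandas); the function names, the 60% training fraction and the sample values below are assumptions for illustration only.

```python
import random


def one_hot(index, num_classes):
    """Return a list with 1.0 at `index` and 0.0 elsewhere."""
    return [1.0 if i == index else 0.0 for i in range(num_classes)]


def build_dataset(samples_by_class):
    """Pair every (r, g, b) sample with the one-hot label of its class."""
    classes = sorted(samples_by_class)
    inputs, outputs = [], []
    for index, name in enumerate(classes):
        for sample in samples_by_class[name]:
            inputs.append(list(sample))
            outputs.append(one_hot(index, len(classes)))
    return classes, inputs, outputs


def shuffle_and_split(inputs, outputs, train_fraction=0.6, seed=0):
    """Shuffle inputs and outputs together, then split into train and test."""
    order = list(range(len(inputs)))
    random.Random(seed).shuffle(order)
    cut = int(len(order) * train_fraction)
    train = [(inputs[i], outputs[i]) for i in order[:cut]]
    test = [(inputs[i], outputs[i]) for i in order[cut:]]
    return train, test


if __name__ == "__main__":
    # Made-up color ratios standing in for the captured CSV data.
    data = {
        "apple": [(0.60, 0.30, 0.10), (0.58, 0.31, 0.11)],
        "banana": [(0.45, 0.42, 0.13), (0.46, 0.41, 0.13)],
        "orange": [(0.55, 0.33, 0.12)],
    }
    classes, inputs, outputs = build_dataset(data)
    train, test = shuffle_and_split(inputs, outputs)
    print(classes, len(train), len(test))
```

Shuffling with a single index order keeps each sample paired with its label, and the held-out test portion gives a rough check that the model has not simply memorized the training samples.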

Program TensorFlow Lite Micro model to the Arduino board

Finally, we will take the model we trained in the previous stage, then compile and upload it to our Arduino board using Arduino Create.

Your browser will open the Arduino Create web application:

- Press the OPEN IN WEB EDITOR button

- Import the model.h you downloaded from Colab using Import File to Sketch:

- Compile and upload the application to your Arduino board

- This will take a minute

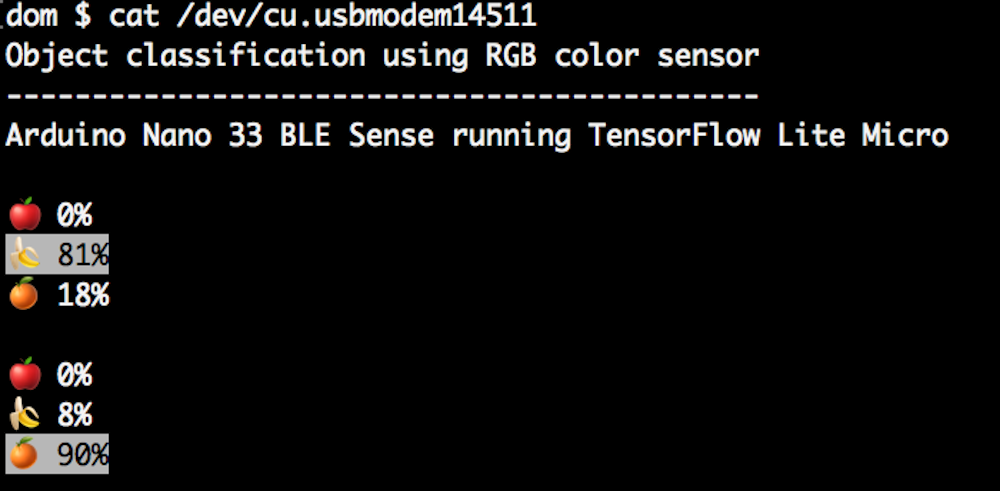

- When it’s done you’ll see this message in the Monitor:

- Put your Arduino’s RGB sensor near the objects you trained it with

- You will see the classification output in the Monitor:

You can also edit the object_color_classifier.ino sketch to output emojis instead (we’ve left the Unicode in the comments in the code!). You will be able to view these in a macOS or Linux terminal by closing the web browser tab with Arduino Create in it, resetting your board, and typing cat /dev/cu.usbmodem[n] (on Linux the device is typically named /dev/ttyACM[n] instead).
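Conceptually, a classifier like this produces one score per class, and the highest score gives the predicted object. The following Python sketch shows that final step under some assumptions: the class list, the emoji mapping and the function names are hypothetical and chosen for this example, and the exact output format of the Arduino sketch may differ.

```python
# Hypothetical class names and emoji for illustration only.
CLASSES = ["apple", "banana", "orange"]
EMOJI = {
    "apple": "\U0001F34E",   # red apple
    "banana": "\U0001F34C",  # banana
    "orange": "\U0001F34A",  # tangerine
}


def format_scores(scores, classes=CLASSES):
    """Render one 'name: percent' line per class."""
    return [f"{name}: {score:.0%}" for name, score in zip(classes, scores)]


def best_class(scores, classes=CLASSES):
    """Return the class name with the highest score."""
    index = max(range(len(scores)), key=lambda i: scores[i])
    return classes[index]


if __name__ == "__main__":
    scores = [0.05, 0.93, 0.02]  # made-up model output
    print("\n".join(format_scores(scores)))
    print(EMOJI[best_class(scores)])
```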

Learning more

The resources around TinyML are still emerging but there’s a great opportunity to get a head start and meet experts coming up December 2nd-3rd in Mountain View, California at the Arm AIoT Dev Summit. This includes workshops from Sandeep Mistry, Arduino technical lead for on-device ML and from Google’s Pete Warden and Daniel Situnayake who literally wrote the book on TinyML. You’ll be able to hang out with these experts and more at the TinyML community sessions there too. We hope to see you there!

Conclusion

We’ve seen a quick end-to-end demo of machine learning running on Arduino. The same framework can be used to sample different sensors and train more complex models. For our color-based object classification we could do more by sampling more examples in more conditions to help the model generalize. In future work, we may also explore how to run an on-device CNN. In the meantime, we hope this will be a fun and exciting project for you. Have fun!