Controlling a bionic hand with tinyML keyword spotting

Movement commands are traditionally sent to prosthetic devices through electromyography (reading electrical signals from muscles) or a simple Bluetooth module. In this project, Ex Machina took a different approach: the user speaks a command, and the hand performs the corresponding gesture.

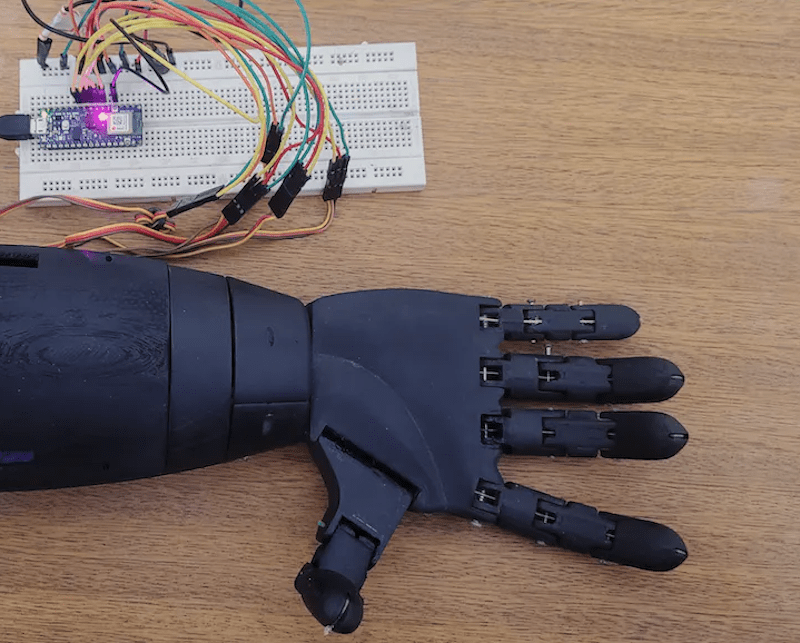

The hand itself is a 3D-printed assembly driven by five SG90 servo motors, each of which moves an individual finger. They are all controlled by a single Arduino Nano 33 BLE Sense, which captures voice data, determines the requested gesture, and sends signals to both the servo motors and an RGB LED that indicates the current action.

To recognize the keywords, Ex Machina collected 3.5 hours of audio data split across six labels: “one,” “two,” “OK,” “rock,” “thumbs up,” and “nothing,” all spoken in Portuguese. The samples were then added to a project in the Edge Impulse Studio and passed through an MFCC (Mel-frequency cepstral coefficients) processing block, which extracts features well suited to human speech. Finally, a Keras model trained on the resulting features reached an accuracy of 95%.

Once deployed to the Arduino, the model is continuously fed new audio data from the built-in microphone so that it can infer the matching label. A switch statement then sets each servo to the angle required for that gesture. For more details on the voice-controlled bionic hand, see Ex Machina’s write-up on Hackster.io.
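
As a rough illustration of the last two steps (choosing a label from the classifier's scores, then mapping it to servo angles with a switch statement), here is a minimal sketch in plain C++. It is not Ex Machina's code. The names `pickGesture` and `anglesFor`, the confidence threshold, the finger order, the open and closed angles, and the pose chosen for each gesture are all assumptions made for the example.

```cpp
#include <array>
#include <cstddef>

// The six labels named in the article. The enum order is an assumption;
// a real deployment would follow the label order of the exported model.
enum class Gesture { One, Two, Ok, Rock, ThumbsUp, Nothing };

constexpr std::size_t kNumLabels = 6;
constexpr std::size_t kNumFingers = 5;

// Hypothetical servo angles in degrees; real values depend on the linkage.
constexpr int kOpen = 0;
constexpr int kClosed = 180;

// Assumed finger order: thumb, index, middle, ring, pinky.
using FingerAngles = std::array<int, kNumFingers>;

// Pick the label with the highest score. If even the best score is below
// the threshold (an assumed safeguard), fall back to Gesture::Nothing.
Gesture pickGesture(const std::array<float, kNumLabels>& scores,
                    float threshold) {
    std::size_t best = 0;
    for (std::size_t i = 1; i < kNumLabels; ++i) {
        if (scores[i] > scores[best]) {
            best = i;
        }
    }
    if (scores[best] < threshold) {
        return Gesture::Nothing;
    }
    return static_cast<Gesture>(best);
}

// Map a gesture to one target angle per finger using a switch statement.
// The poses below are illustrative guesses, not measured values.
FingerAngles anglesFor(Gesture g) {
    switch (g) {
        case Gesture::One:
            return {kClosed, kOpen, kClosed, kClosed, kClosed};
        case Gesture::Two:
            return {kClosed, kOpen, kOpen, kClosed, kClosed};
        case Gesture::Ok:
            return {kClosed, kClosed, kOpen, kOpen, kOpen};
        case Gesture::Rock:
            return {kClosed, kOpen, kClosed, kClosed, kOpen};
        case Gesture::ThumbsUp:
            return {kOpen, kClosed, kClosed, kClosed, kClosed};
        case Gesture::Nothing:
            break;
    }
    // Assumed resting pose: all fingers open.
    return {kOpen, kOpen, kOpen, kOpen, kOpen};
}
```

On the board itself, each returned angle would then be handed to the corresponding servo, for example through the Arduino Servo library's `write()` call. Keeping the mapping in a pure function like this makes it easy to check on a desktop before any hardware is attached.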